Locks, Actors, And Stm In Pictures

All programs with concurrency have the same problem.

Your program uses some memory:

When your code is single-threaded, there's just one thread writing to memory. You are A-OK:

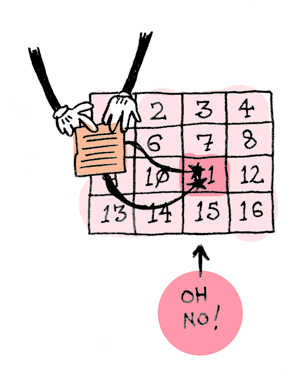

But if you have more than one thread, they could overwrite each others changes!

You have three ways of dealing with this problem:

- Locks

- Actors

- Software Transactional Memory

I'll solve a classic concurrency problem all three ways and we can see which way is best. I'm solving the Dining Philosophers problem. If you don't know it, check out part 1 of this post!

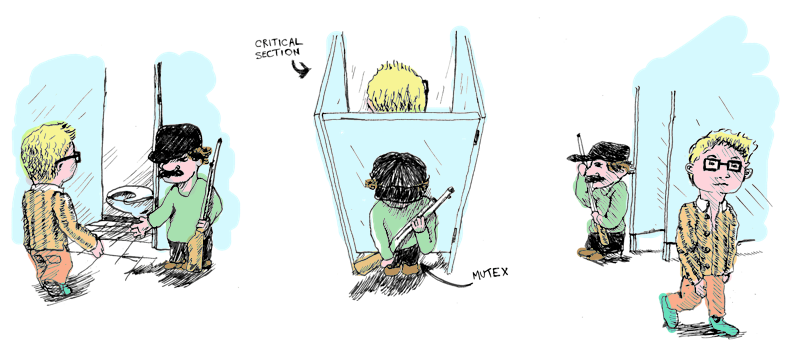

Locks

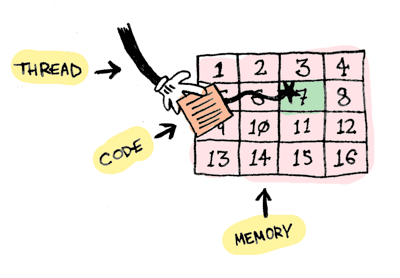

When your code accesses some memory, you lock it up:

mutex == the lock.

critical section == the code locked with a mutex.

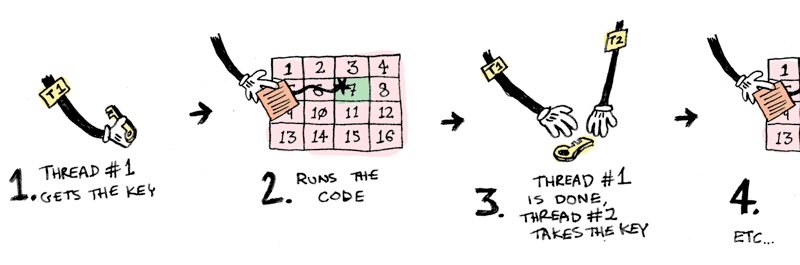

Now if a thread wants to run this code, he (or she) needs the key. So only one thread can run the code at a time:

Sweet! Only one thread can write to that memory at a time now. Problem solved! Right?

Here's a Ruby implementation of the resource hierarchy solution.

Each Philosopher gets a left and a right fork (both forks are mutexes):

class Philosopher

def initialize(name, left_fork, right_fork)

@name = name

@left_fork = left_fork

@right_fork = right_fork

end

Now we try to get the forks:

while true

@left_fork.lock

puts "Philosopher #@name has one fork..."

if @right_fork.try_lock

break

else

puts "Philosopher #@name cannot pickup second fork"

@left_fork.unlock

end

end

- A philosopher picks up fork 1. He waits till he has it (

lockwaits). - He tries to pick up fork 2, but doesn't wait (

try_lockdoesn't wait). - If he didn't get fork 2, he puts back fork 1 and tries again.

Full code here. Here's an implementation using a waiter instead.

Locks are super tricky to use. If you use locks, get ready for all sorts of subtle bugs that will make your threads deadlock or starve. This post talks about all the problems you could run into.

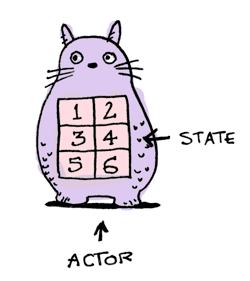

Actors

I love actors! You love actors! Actors are solitary and brooding. Every actor manages its own state:

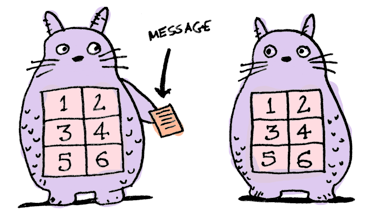

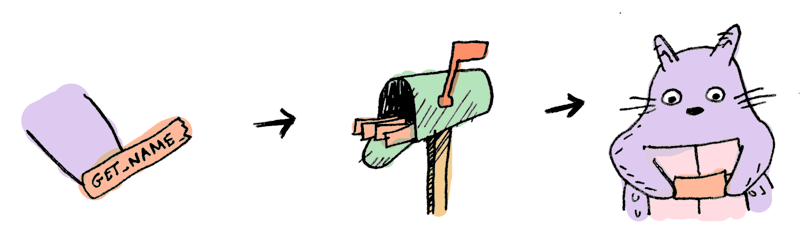

Actors ask each other to do things by passing messages:

Actors never share state so they never need to compete for locks for access to shared data. If actors never block, you will never have deadlock! Actors are never shared between threads, so only one thread ever accesses the actor's state.

When you pass a message to an actor, it goes in his mailbox. The actor reads messages from his mailbox and does those tasks one at a time:

My favorite actor library for Ruby is Celluloid. Here's a simple actor in Celluloid:

class Dog

include Celluloid

def set_name name

@name = name

end

def get_name

@name

end

end

See that include Celluloid? That's all it takes, and now every Dog is an actor!

> d = Dog.new

=> #<Celluloid::ActorProxy(Dog:0x3fe988c0d60c)>

> d.set_name "snowy"

=> "snowy"

Here we are telling the actor, d, to set its name to "snowy" synchronously. Here we instead pass it a message to set the name asynchronously:

d.async.set_name "snoopy"

=> nil

d.get_name

=> "snoopy"

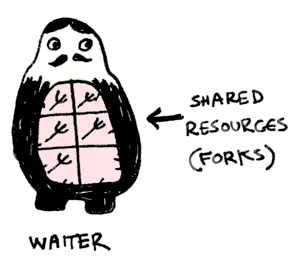

Pretty cool. To solve the dining philosophers problem, we need to model the shared state using an actor. So we introduce a Waiter:

class Waiter

include Celluloid

FORK_FREE = 0

FORK_USED = 1

def initialize(forks)

@philosophers = []

@eating = []

@forks = [FORK_FREE, FORK_FREE, FORK_FREE, FORK_FREE, FORK_FREE]

end

end

The waiter is in charge of forks:

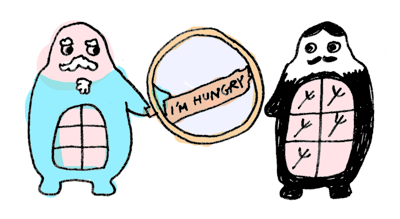

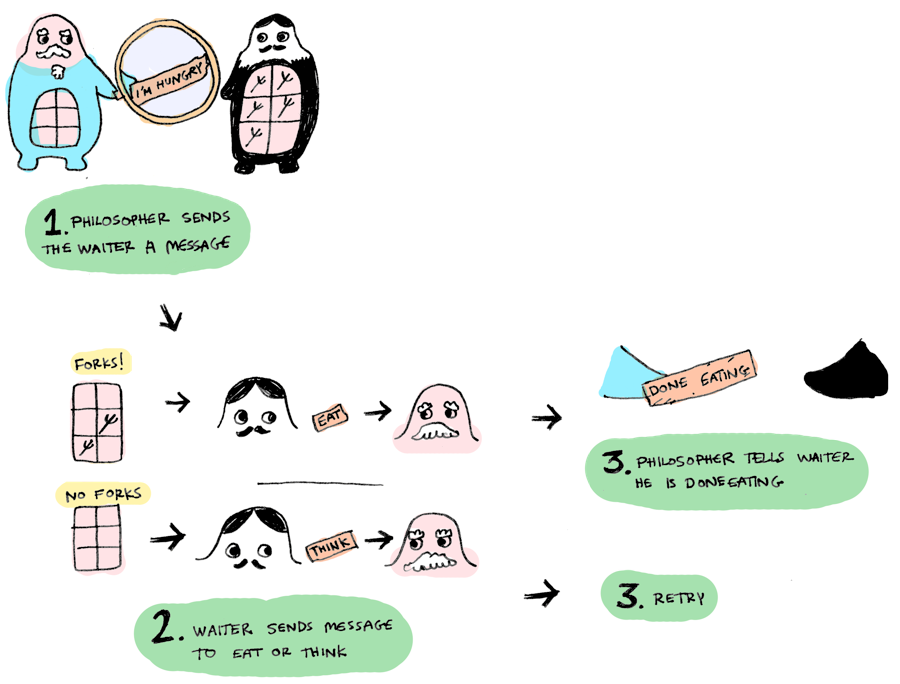

When a Philosopher gets hungry, he lets the waiter know by passing a message:

def think

puts "#{name} is thinking"

sleep(rand)

puts "#{name} gets hungry"

waiter.async.hungry(Actor.current)

end

When the waiter gets the message, he sees if the forks are available.

- If they are available, he will mark them as 'in use' and send the philosopher a message to eat.

If they are in use, he tells the philosopher to keep thinking.

def hungry(philosopher) pos = @philosophers.index(philosopher)

leftpos = pos rightpos = (pos + 1) % @forks.size

if @forks[leftpos] == FORKFREE && @forks[rightpos] == FORKFREE @forks[leftpos] = FORKUSED @forks[rightpos] = FORKUSED @eating << philosopher philosopher.async.eat else philosopher.async.think end end

Full code here. If you want to see what this looks like using locks instead, look here.

The shared state is the forks, and only one thread (the waiter) is managing the shared state. Problem solved! Thanks Actors!

Software Transactional Memory

I'm going to use Haskell for this section, because it has a very good implementation of STM.

STM is very easy to use. It's just like transactions in databases! For example, here's how you pick up two forks atomically:

atomically $ do

leftFork <- takeFork left

rightFork <- takeFork right

That's it! No need to mess around with locks or message passing. Here's how STM works:

- You make a variable that will contain the shared state. In Haskell this variable is called a

TVar:

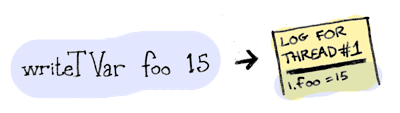

You can write to a TVar using writeTVar or read using readTVar. A transaction deals with reading and writing TVars.

- When a transaction is run in a thread, Haskell creates a transaction log that is for that thread only.

- Whenever one a block of shared memory is written to (with

writeTVar), the address of theTVarand its new value is written into the log instead of to theTVar:

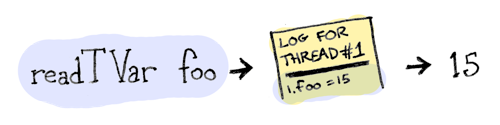

- Whenever a block is read (using

readTVar):- first the thread will search the log for the value(in case the TVar was written by an earlier call to writeTVar).

- if nothing is found, then value is read from the TVar itself, and the TVar and value read are recorded in the log.

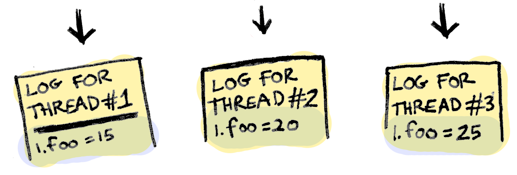

In the meantime, other threads could be running their own atomic blocks, and modifying the same TVar.

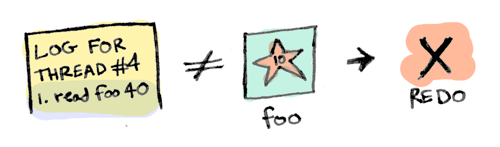

When the atomically block finishes running, the log gets validated. Here's how validation works:

we check each readTVar recorded in the log and make sure it matches the value in the real TVar. If they match, the validation succeeds! And we write the new value from the transaction log into the TVar.

If validation fails, we delete the transaction log and run the block all over again:

Since we're using Haskell, we can guarantee that the block had no side-effects...i.e. we can run it over and over and it will always return the same result!

Haskell also has TMVars, which are similar. A TMVar either holds a value or is empty:

You can put a value in a TMVar using putTMVar or take the value in the TMVar using takeTMVar.

- If there you try to put a value in a

TMVarand there's something there already,putTMVarwill block until it is empty. - If there you try to take a value from a

TMVarand it is empty,takeTMVarwill block until there's something in there.

Our forks are TMVars. Here are all the fork-related functions:

newFork :: Int -> IO Fork

newFork i = newTMVarIO i

takeFork :: Fork -> STM Int

takeFork fork = takeTMVar fork

releaseFork :: Int -> Fork -> STM ()

releaseFork i fork = putTMVar fork i

A philosopher picks up the two forks:

(leftNum, rightNum) <- atomically $ do

leftNum <- takeFork left

rightNum <- takeFork right

return (leftNum, rightNum)

He eats for a bit:

putStrLn $ printf "%s got forks %d and %d, now eating" name leftNum rightNum

delay <- randomRIO (1,3)

threadDelay (delay * 1000000)

putStrLn (name ++ " is done eating. Going back to thinking.")

And puts the forks back.

atomically $ do

releaseFork leftNum left

releaseFork rightNum right

Actors require you to restructure your whole program. STM is easier to use – you just specify what parts should run atomically. Clojure and Haskell both have core support for STM. It's also available as modules for a lot of other languages: C, Python, Scala, JavaScript etc etc.

I'm pretty excited to see STM used more!

Conclusion

Locks

- available in most languages

- give you fine-grained control over your code

- Complicated to use. Your code will have subtle deadlock / starvation issues. You probably shouldn't use locks.

Actors

- No shared state, so writing thread-safe is a breeze

- No locks, so no deadlock unless your actors block

- All your code needs to use actors and message passing, so you may need to restructure your code

STM

- Very easy to use, don't need to restructure code

- No locks, so no deadlock

- Good performance (threads spend less time idling)

References

- Dining Philosopher implementations in different languages

- Simon Peyton Jones explains STM

- Carl Hewitt explains Actors

- On Akka, Erlang, and ATOM by tarcieri

For more drawings, check out Functors, Applicatives, and Monads in pictures.